Phil Birnbaum wrote a blog piece back in 2014 about how the spread of points in predictions should always be narrower than the spread of points in real life.

Put simply, this is because the spread of points is influenced by 2 factors:

- The different abilities of the teams

- Which teams get lucky or unlucky

We can’t predict which teams will be lucky, so we can only hope to predict the spread of points which is caused by their differing abilities. As this will be less than the total spread of points, predictions should always be narrower than real life.

“How much narrower?”, I hear you cry. Well, thankfully there is a mathematical equation which helps us to determine that.

SD(total)^2 = SD(ability)^2 + SD(luck)^2

SD stand for Standard Deviation, which is a mathematical measure of how spread out the points are. In Excel you can easily calculate the SD for a set of numbers using the STDEV() function.

As we are trying to calculate the SD attributable to ability, let’s rearrange this formula and start filling in what we know.

SD(ability) = SquareRoot ( SD(total)^2 – SD(luck)^2 )

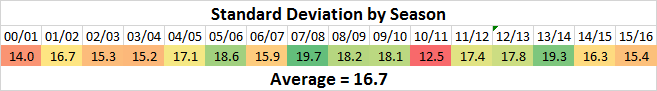

First of all then, let’s look at the total spread of points. We can do this by looking at the SD of points scored in previous seasons.

So there’s quite a range, from 12.5 in 2010/11 to 19.7 in 2007/08.

On average, the total SD in a season is 16.7.

Let’s add that to our formula:

SD(ability) = SquareRoot ( 16.7^2 – SD(luck)^2 )

SD(ability) = SquareRoot ( 278.9 – SD(luck)^2 )

So, what about the luck? How do we start to calculate that?

Neil Charles estimated it here to be around 7.4.

Using the model I built for my previous posts on the natural limits of predictions (Part 1 and Part 2), I calculate a figure of 7.1. Using a model based on betting odds, I arrive at a figure of 7.4.

These models run lots of simulations of a league with a spread of ability similar to the premier league, using H/D/A percentages derived either from historical data or betting odds, and look at the range of points that can arise. As the abilities are the same in each simulation, any variation which emerges is entirely due to luck.

As these models all produce very similar figures, let’s take 7.4 and plug it into our formula.

SD(ability) = SquareRoot ( 278.9 – 7.4^2 )

SD(ability) = SquareRoot ( 278.9 – 54.8 )

SD(ability) = SquareRoot ( 224.1 )

SD(ability) = 15.0

Finally, an answer.

This means that for predictions made at the start of a season, 15.0 is the maximum SD we would expect to see, if a model had perfect knowledge of the ability of each team. Anything more than that is probably trying to predict which teams will be lucky, which is impossible.

The SD can be seen as a measure of confidence. A low SD is an acceptance that you can’t accurately measure the abilities of the teams. A high SD shows that you are confident about the accuracy of your model. If your SD breaks the maximum, there is probably something wrong with your methodology.

So, that’s all very good for pre-season predictions. But what about predictions part way through the season? We have had 13 games played already, what sort of SD should we expect for future points at this stage?

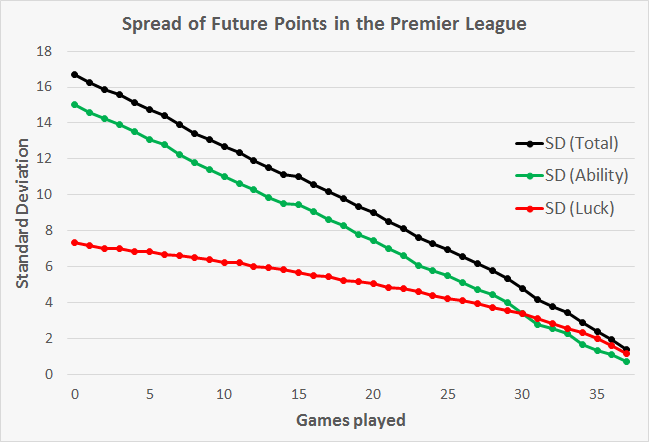

We can use the same method to calculate the maximum SD after each game, when predicting points scored in the remainder of the season. This produces the following:

The green line here represents the maximum SD we would expect a prediction to have at each stage in the season.

With 13 games played, the maximum SD value should be 9.9.

To calculate the SD of a prediction, we do the following:

1) Take the predicted final point tallies for each team

2) Deduct the number of points each team already has to get the predicted future points

3) Use the STDEV() formula on Excel on the predicted future points

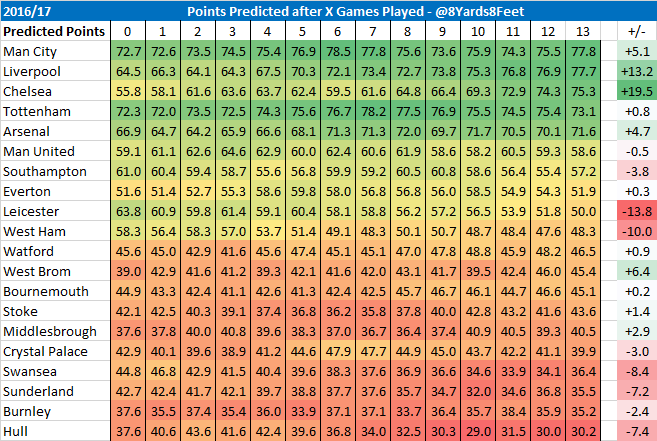

To start with, let’s look at my own predictions:

Using the above method, I deduct points already scored from each week’s predictions, and then calculate the SD of the future points at each stage.

This results in the following:

Good news, my model has remained below the green line, which represents the average maximum predictable variance.

There are 2 reasons a model might go above the line:

- There is a problem with the methodology

- This season has a larger spread of points than average

In my next post I will look at other public models to see how they compare, and try establish whether this season has a larger spread of abilities than the average season.

Much of the above methodology comes from this post by James Grayson, whose blog is well worth a read.

As ever, feedback is much appreciated.